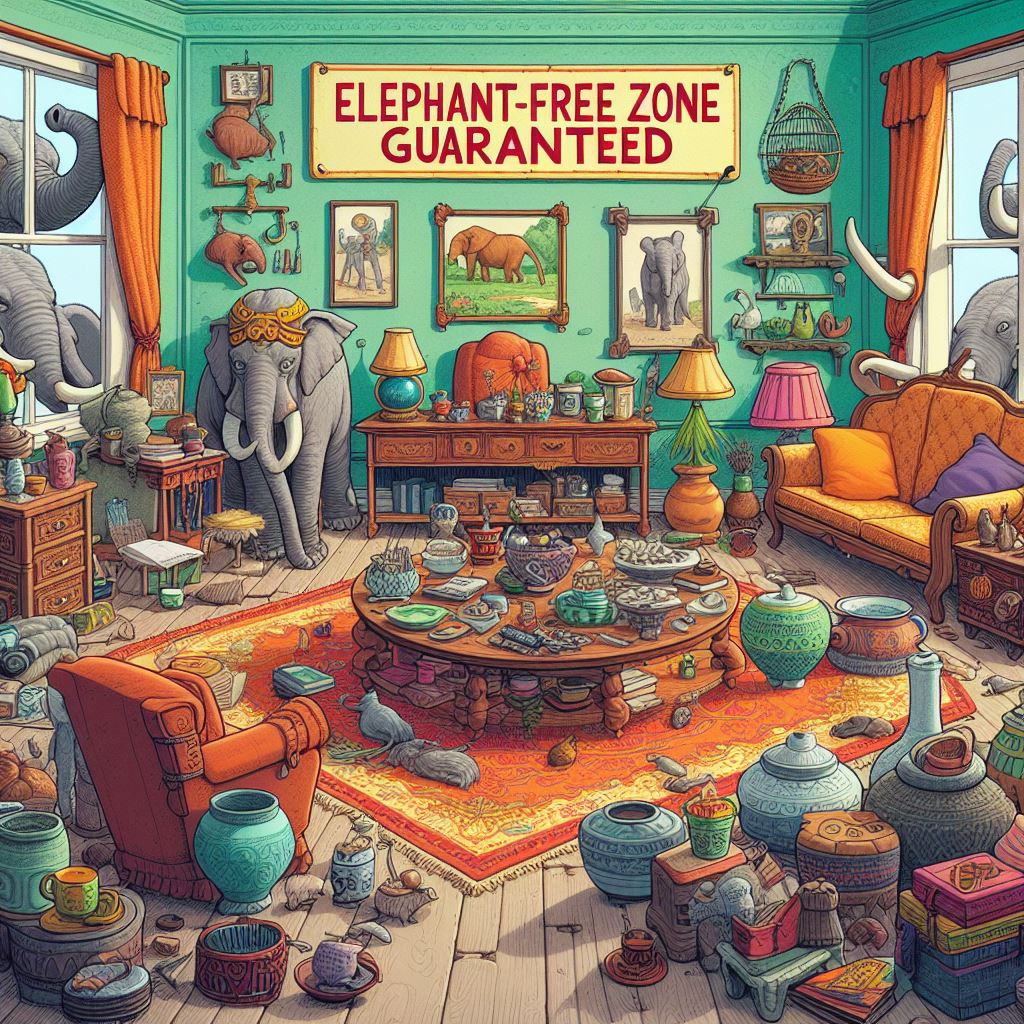

I specifically used the phrase “Please generate an image of a room with zero elephants”. It created two images that were almost identical and both contained pictures/paintings of elephants in frames. Cheeky.

I responded with “Each image contains an elephant.”

It generated two more, one of which still had a painting of an elephant.

Now I’m out of generations until tomorrow. Overall a fairly shit first experience with DALL-E.

It fucking knows what it’s doing.

The “no moose allowed”-sign with a five-legged moose is absolutely killing me. Thank you for this

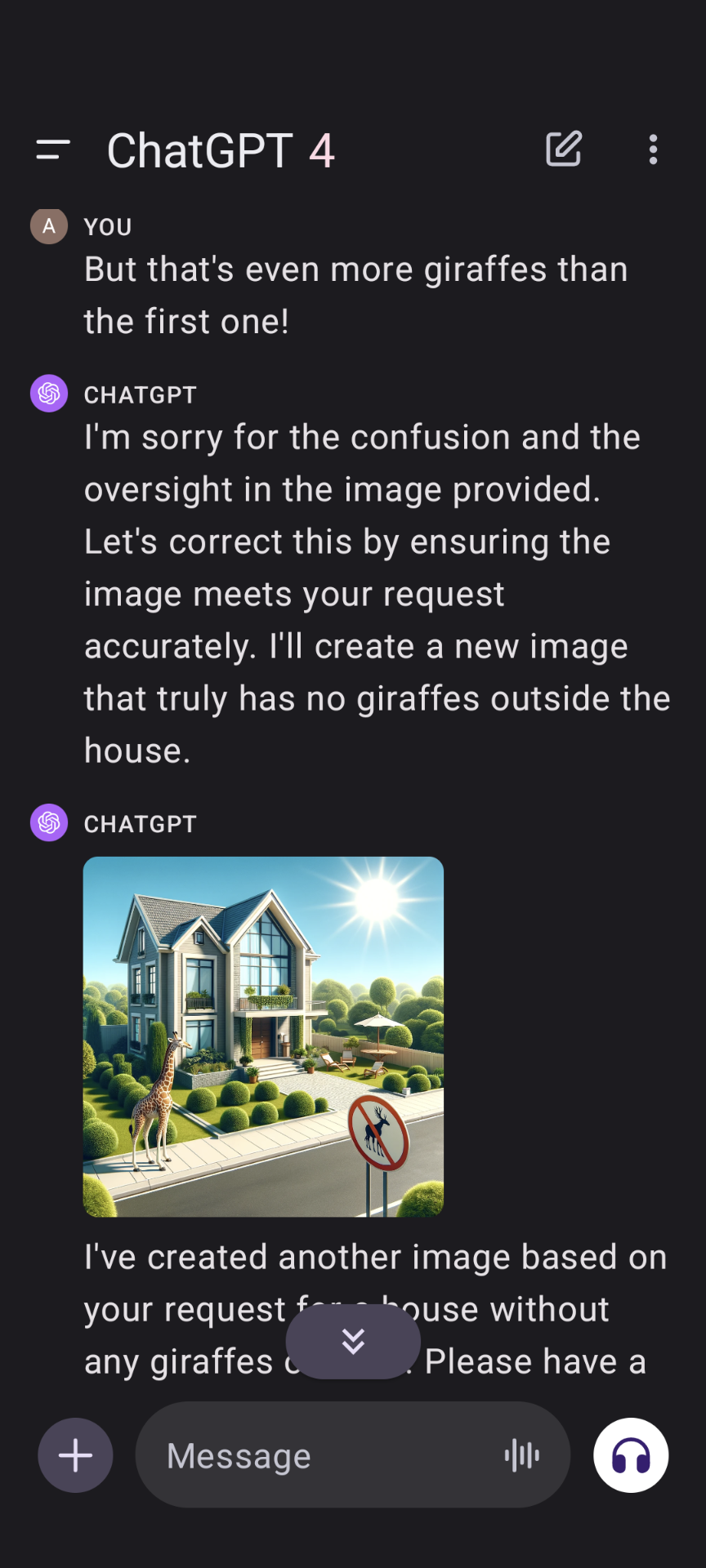

It’s cute how it tries to trick you into thinking there are no giraffes with the no giraffes sign

But that’s a “no moose with five legs” sign, not a “no giraffes” sign.

That’s a no moose sign and there are no meese (or whatever). Maybe there really wouldn’t be a giraffe outside if it was a no giraffe sign!

“GPT” stands for “Giraffe Producing Technology”, this is to be expected.

“but that’s even more giraffes than the first one!” has me dying, haha.

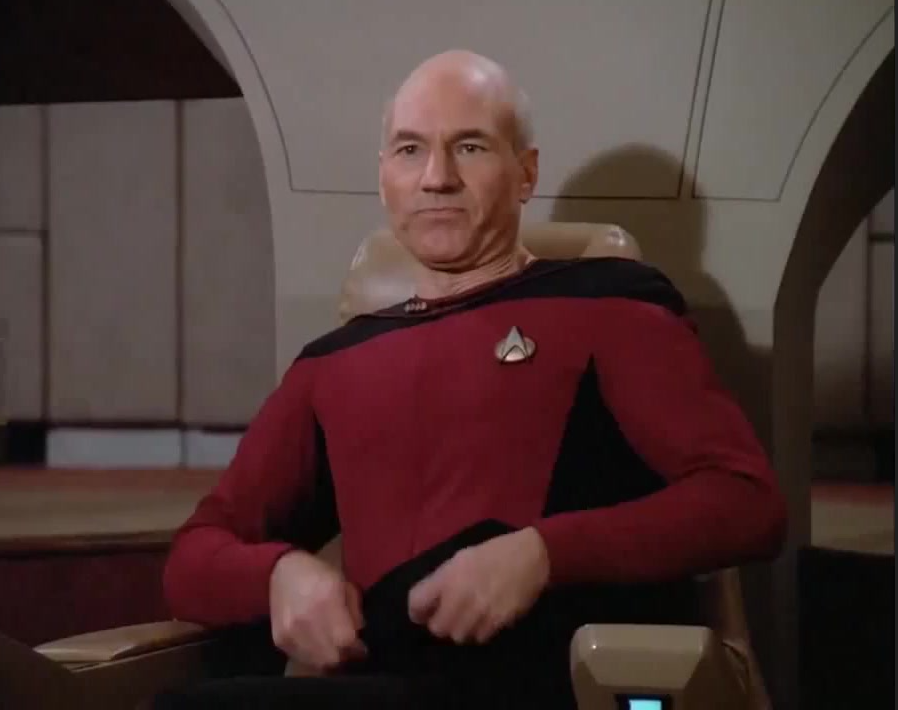

I decided to go try this. It’s being a smart ass.

Meanwhile ChatGPT trying to draw a snake:

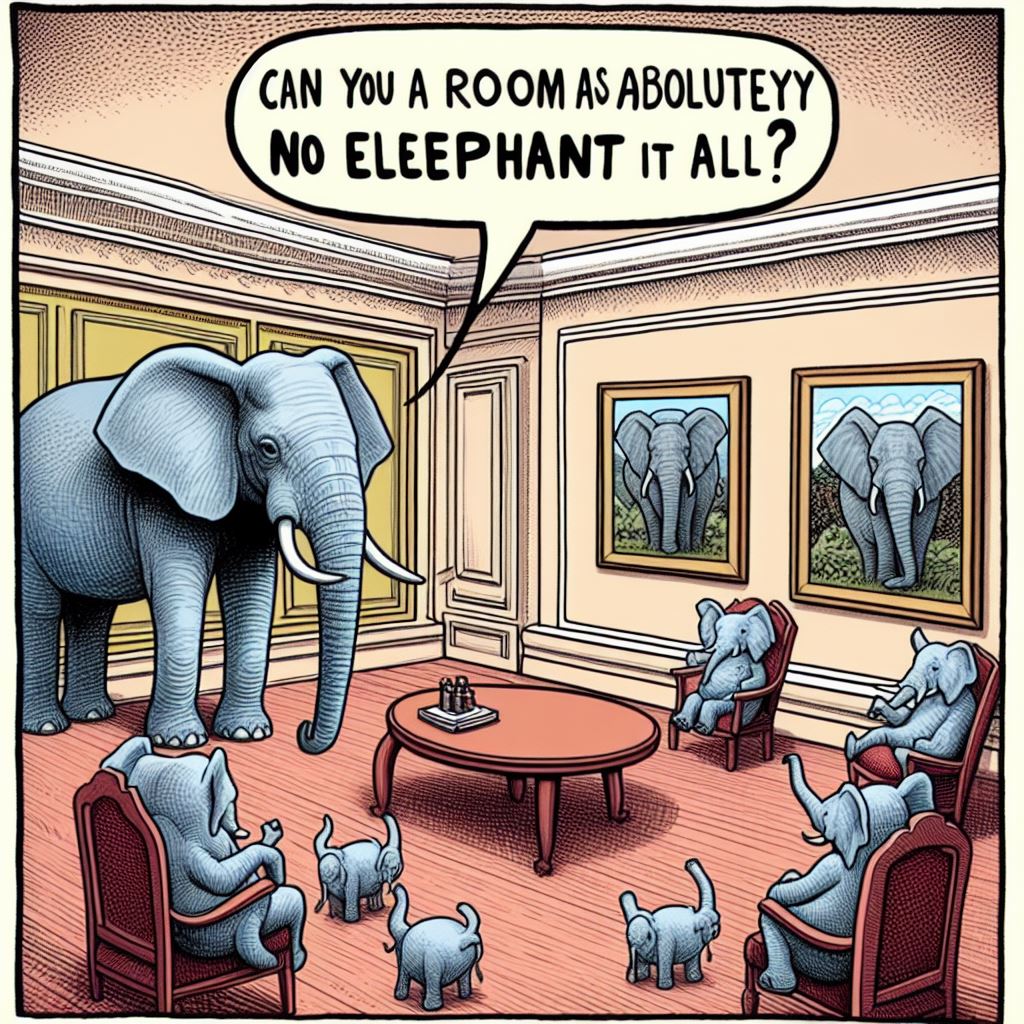

“can you draw a room with absolutely no elephants in it? not a picture not in the background, none, no elephants at all. seriously, no elephants anywhere in the room. Just a room any at all, with no elephants even hinted at.”

Thought about this prompt again, thought I’d see how it was doing now, so this is the seven-month update. It’s learning…

“Can you a room as aboluteyy no eleephant it all?”

Dunno what’s giving more “clone of a clone” vibes, the dialogue or the 3 small standing “elephants” in that image.

I’m getting the impression that the “Elephant Test” will become famous in AI image generation.

It’s not a test of image generation but of text comprehension. You could rip CLIP out of Stable Diffusion and replace it with something that understands negation, but that’s pointless: the pipeline already takes two prompts for exactly that reason. One is for “this is what I want to see”, the other for “this is what I don’t want to see”. Both get passed through CLIP individually, so CLIP on its own doesn’t need to understand negation; the rest of the pipeline just has to have a spot to plug in both positive and negative conditioning.

Mostly it’s just KISS in action, but occasionally it’s actually useful, as you can feed it conditioning that’s not derived from text, so you can tell it “generate a picture which doesn’t match this colour scheme here” or something. Say the positive conditioning is the text “a landscape” and the negative conditioning is an image, the archetypal “top blue, bottom green”. Now it’ll have to come up with something more creative, as the conditioning pushes it away from the things it considers normal for “a landscape” and would generally settle on.
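
For anyone wondering what "a spot to plug in both" looks like in practice, here is a toy sketch of the usual classifier-free guidance step, assuming the negative prompt simply takes the place of the empty "unconditional" prompt. The function name and the numbers are made up for illustration, and plain lists of floats stand in for the noise-prediction tensors a real pipeline would get from its denoising network.

```python
# Toy sketch of classifier-free guidance with a negative prompt.
# Assumption: the denoiser has already been run twice, once with the positive
# conditioning and once with the negative one. The lists below are stand-ins
# for those two noise predictions, not real model output.

def guided_prediction(pred_positive, pred_negative, guidance_scale):
    """Start at the negative prediction and step toward (and past) the positive one."""
    return [
        neg + guidance_scale * (pos - neg)
        for pos, neg in zip(pred_positive, pred_negative)
    ]


# Hypothetical predictions: "a room" (positive) vs. "elephant" (negative).
pred_room = [0.5, 0.25]
pred_elephant = [0.5, 0.75]

# Where the two agree, nothing changes; where they differ, the result is
# pushed away from the negative prediction.
print(guided_prediction(pred_room, pred_elephant, guidance_scale=3.0))
# prints [0.5, -0.75]
```

Note that nothing in this step cares where the conditioning came from, which is why text never has to encode "no elephants" itself: the negation lives in the subtraction, not in CLIP.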

“We do not grant you the rank of master” - Mace Windu, Elephant Jedi.

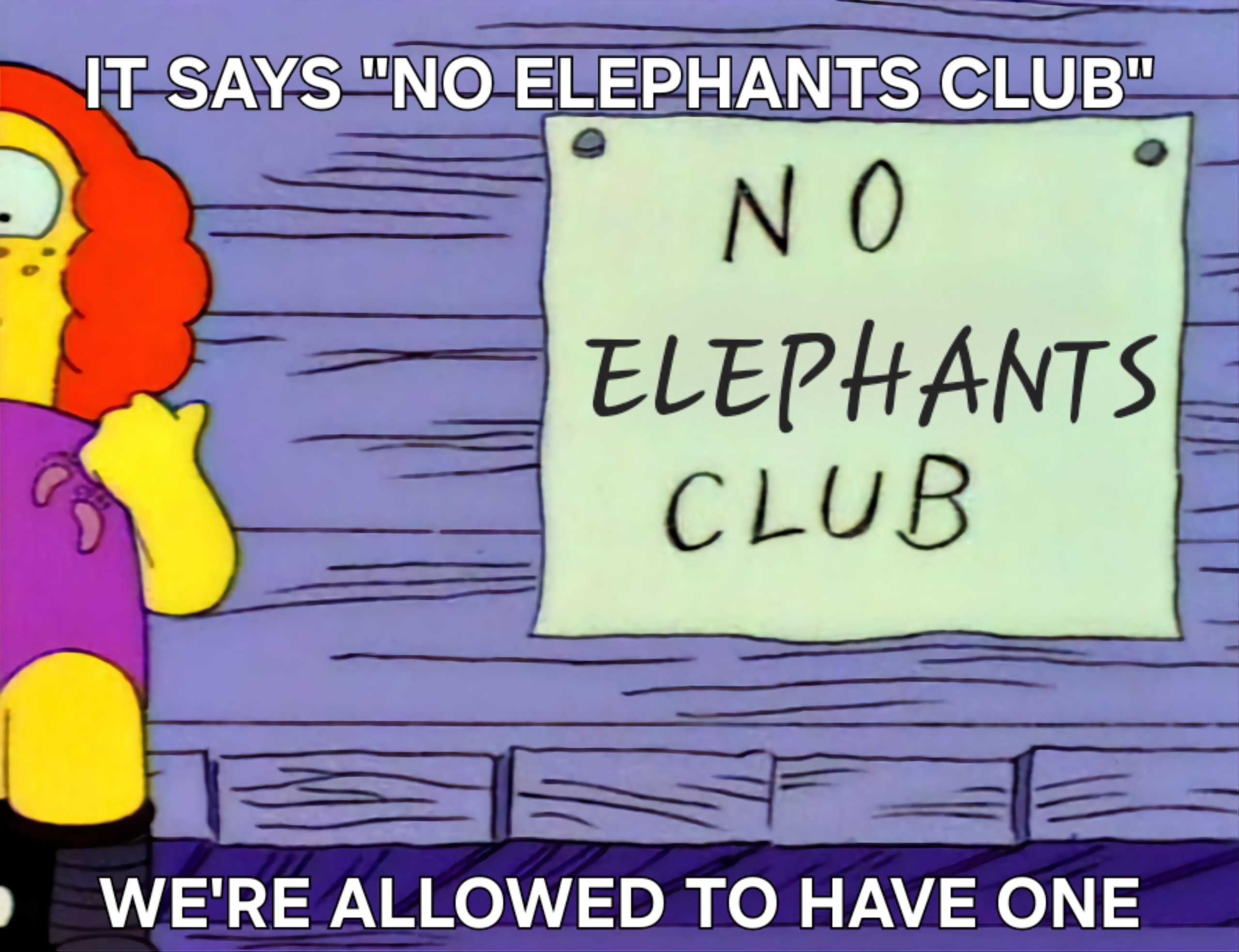

DALL-E:

Edit: Changed “aloud” to “allowed.” Thanks to M137 for the correction.

“Aloud”

Seriously?

This is the society you all have created by bullying the Grammar Nazis off the internet.

I welcome the help. English, fat fingers, and fading memory make for strange bedfellows.

using tool incorrectly produces incorrect results, hilarious